Why do state governments want data centres?

In today’s Finshots, we talk about the strange economics of data centres and why governments are subsidising them so aggressively.

The Story

Nowadays, data centres are increasingly viewed as critical infrastructure, just like airports or power plants. And historically, large infrastructure projects mean one thing: jobs.

Let’s take airports as an example. A new airport can directly employ thousands of people across operations, logistics, retail, etc.

So, from the government’s perspective, if you build massive infrastructure, jobs will follow.

In line with this narrative, several states are competing aggressively to attract hyperscale server parks by offering cheap land, subsidised electricity, and even expedited approvals for clearances that normally take months.

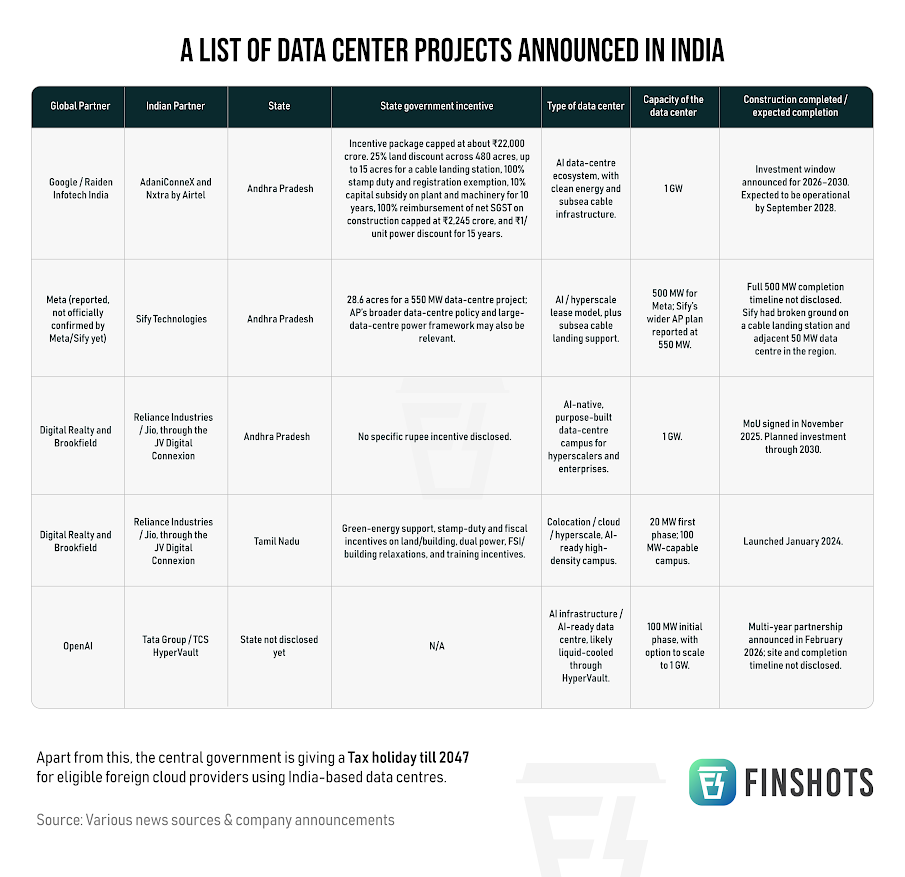

To understand how aggressive this buildout has become, take a look at some of the major projects announced across India:

Andhra Pradesh’s government has gone even further in framing these projects as large-scale employment engines. State officials recently claimed that Google’s proposed $15 billion data-centre investment could generate nearly 1.88 lakh direct and indirect jobs.

On paper, numbers like these make the sector look comparable to earlier industrial booms that transformed local labour markets. But once you look more closely at how data centres actually operate, the math becomes far less straightforward.

To understand this, let’s take a look at what data centres actually are.

One can call them infrastructure, or even factories. However, their value comes not from human labour but from computing density, energy access, and uptime reliability.

This gap between perception and reality becomes clearer when you look at global studies on hyperscale infrastructure. McKinsey estimates that a typical large data centre, around 2,50,000 sq ft, may require up to 1,500 workers during peak construction activity. However, once the facility becomes operational, permanent employment can fall to about 50 on-site jobs.

Of course, supporters of the industry argue that direct jobs alone miss the bigger picture. Construction activity creates spillover demand for logistics, maintenance, catering, equipment suppliers, telecom infrastructure, and local services. McKinsey estimates that every direct on-site job can support roughly 3.5 additional jobs in the surrounding economy through indirect and induced effects.
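To make the multiplier concrete, here is a rough sketch that applies it to the operational headcount quoted earlier. Pairing the 50 on-site jobs with the 3.5 multiplier is our own assumption for illustration, and the function name is hypothetical.

```python
# Figures quoted in the story (attributed there to McKinsey).
ONSITE_JOBS = 50              # permanent on-site jobs once a large facility is running
ADDITIONAL_PER_ONSITE = 3.5   # indirect and induced jobs supported per on-site job


def total_jobs_supported(onsite_jobs: float, additional_per_onsite: float) -> float:
    """Return on-site jobs plus the indirect and induced jobs they support."""
    return onsite_jobs * (1 + additional_per_onsite)


# Assumption: the multiplier applies to the operational on-site headcount.
additional = ONSITE_JOBS * ADDITIONAL_PER_ONSITE
total = total_jobs_supported(ONSITE_JOBS, ADDITIONAL_PER_ONSITE)

print(f"Additional jobs supported: {additional:.0f}")
print(f"Total jobs supported: {total:.0f}")
```

Under these assumptions, even with spillovers counted, a single large facility supports jobs in the low hundreds rather than the thousands.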

Even in India, industry estimates follow a similar pattern. Financial Express reports that hyperscale projects may create 400–600 full-time jobs per 100 megawatts during the construction phase, with long-term operational staffing closer to 100–200 per 100 megawatts once the facility is complete.

This is why claims of nearly 1.88 lakh jobs from a single project naturally raise eyebrows.
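A back-of-the-envelope sketch shows why. It works backwards from the claimed figure to the operational capacity that would be needed to support it, using the staffing range and the multiplier quoted above. Combining these two estimates is our own assumption, the function name is hypothetical, and the calculation ignores temporary construction jobs, which the official claim may well include.

```python
CLAIMED_JOBS = 188_000                    # 1.88 lakh direct and indirect jobs, as claimed
OPERATIONAL_JOBS_PER_100MW = (100, 200)   # long-term staffing range per 100 MW
ADDITIONAL_PER_DIRECT = 3.5               # indirect and induced jobs per direct job


def implied_capacity_mw(claimed_total: float,
                        direct_per_100mw: float,
                        additional_per_direct: float) -> float:
    """Capacity (MW) whose long-term jobs would add up to the claimed total."""
    # Assumption: claimed total = direct jobs * (1 + multiplier).
    direct_needed = claimed_total / (1 + additional_per_direct)
    return direct_needed / direct_per_100mw * 100


low_staffing, high_staffing = OPERATIONAL_JOBS_PER_100MW
# Denser staffing means less capacity is needed, and vice versa.
capacity_min = implied_capacity_mw(CLAIMED_JOBS, high_staffing, ADDITIONAL_PER_DIRECT)
capacity_max = implied_capacity_mw(CLAIMED_JOBS, low_staffing, ADDITIONAL_PER_DIRECT)

print(f"Implied capacity: {capacity_min / 1000:.1f} to {capacity_max / 1000:.1f} GW")
```

On these assumptions, long-term jobs alone would need roughly 21 to 42 gigawatts of capacity to reach 1.88 lakh, so the headline number likely leans heavily on temporary construction work or on a far more generous multiplier.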

And this is not just theory. Consider a practical example.

Economist Michael J. Hicks studied local labour markets in Texas and reached a striking conclusion: data centres generate virtually no net long-term job growth for host communities. While they do generate what economists call “gross jobs” during construction and setup, those gains are often offset elsewhere in the local economy.

So if jobs are not the real story, what exactly are governments chasing?

Well, increasingly, governments view computing infrastructure the way earlier generations viewed ports, railways, oil pipelines, or telecom networks. Nowadays, AI models, digital payments, defence systems, cloud platforms, and national cybersecurity all depend on computing infrastructure. Whoever controls computing capacity controls a critical layer of the future economy. That changes the equation entirely.

Under this framework, data centres are not primarily employment projects, even though they are portrayed as such during announcements. They are considered strategic assets, and hosting them signals technological seriousness. It attracts cloud ecosystems. It improves digital sovereignty. And it positions the country closer to the centre of the AI economy.

However, this strategy also comes with its own risks.

There is growing concern that regions could become little more than “compute landlords” for global tech giants. In that scenario, countries end up supplying the land, electricity, water, and subsidies while the real intellectual property, profits, and innovation remain concentrated elsewhere.

Without parallel investments in semiconductor ecosystems, AI research, advanced manufacturing, and talent development, countries risk capturing only the lowest layer of value creation. And that lowest layer is extremely resource-intensive.

Modern AI data centres consume staggering amounts of electricity because high-end AI chips generate intense heat and require continuous cooling. Supplying that power places enormous strain on local grids and transmission infrastructure. Transformers degrade faster under constant heavy loads, electricity demand spikes, and in some cases surrounding communities may indirectly face higher power costs. Globally, AI infrastructure could require an additional 652 TWh (terawatt-hours) of electricity generation by 2030.

Water usage is equally controversial. A single 1-gigawatt data centre can consume 5 million gallons of water per day for cooling. In water-stressed regions such as parts of India, this creates obvious political and environmental tensions. There have been concerns in Visakhapatnam that multiple AI facilities drawing from shared reservoirs could worsen groundwater depletion.

This is why resistance to data centres is rising globally.

Local communities increasingly question whether highly automated server warehouses justify decades of tax breaks, rising electricity demand, and pressure on scarce water resources, especially when the long-term employment benefits appear limited.

And this is where the story becomes even more interesting. Because the industry itself may already be searching for alternatives to gigantic centralised campuses.

One emerging idea is distributed computing. Instead of concentrating AI servers inside massive facilities, companies are experimenting with placing mini-computing nodes directly inside residential areas. A California-based utility-tech company called Span is piloting such a model: it monitors unused power in real time and redirects that spare capacity to AI workloads running locally. In return, homeowners receive subsidies on electricity and broadband bills.

In theory, this could reduce the need for gigantic centralised data centres consuming huge amounts of land, water, and grid infrastructure. But the model remains highly experimental, and heavy AI workloads in residential areas could, again, strain local grids and raise electricity costs.

Still, the fact that companies are experimenting with putting AI servers in people’s backyards tells you how desperate the global search for computing power has become.

And perhaps that is the real story underneath the data centre boom.

Earlier industrial revolutions created economic growth by directly employing massive numbers of workers. However, modern digital infrastructure creates value differently. Data centres resemble ports, highways, or electricity grids far more than factories. Their importance lies not in how many people they hire directly, but in the ecosystems they enable around them.

That is why governments continue chasing them despite weak employment numbers. They are betting that whoever hosts the infrastructure today may eventually attract the AI startups, cloud ecosystems, software platforms, and strategic relevance of tomorrow.

But this strategy only works if countries move higher up the value chain.

India cannot become merely the world’s backend warehouse for global cloud giants. The real value lies higher up the stack: semiconductor design, AI models, advanced chips, and, more broadly, innovation driven by investment in R&D.

And perhaps the broader lesson goes beyond data centres altogether.

Governments often rush to subsidise the visible symptom rather than solve the underlying problem. The symptom today is the exploding demand for computing capacity. But the underlying issues are inefficient energy systems, increasingly compute-hungry AI models, centralised internet architecture, and the growing mismatch between digital demand and physical infrastructure.

Building more data centres may temporarily relieve the pressure, much like widening roads only temporarily reduces traffic congestion. But unless we also invest in cleaner grids, energy-efficient chips, and better infrastructure, demand for compute will continue rising faster than supply.

So, the future may not belong to the countries that merely build the most data centres. It will belong to those that invest seriously in research and development.

Until next time…
