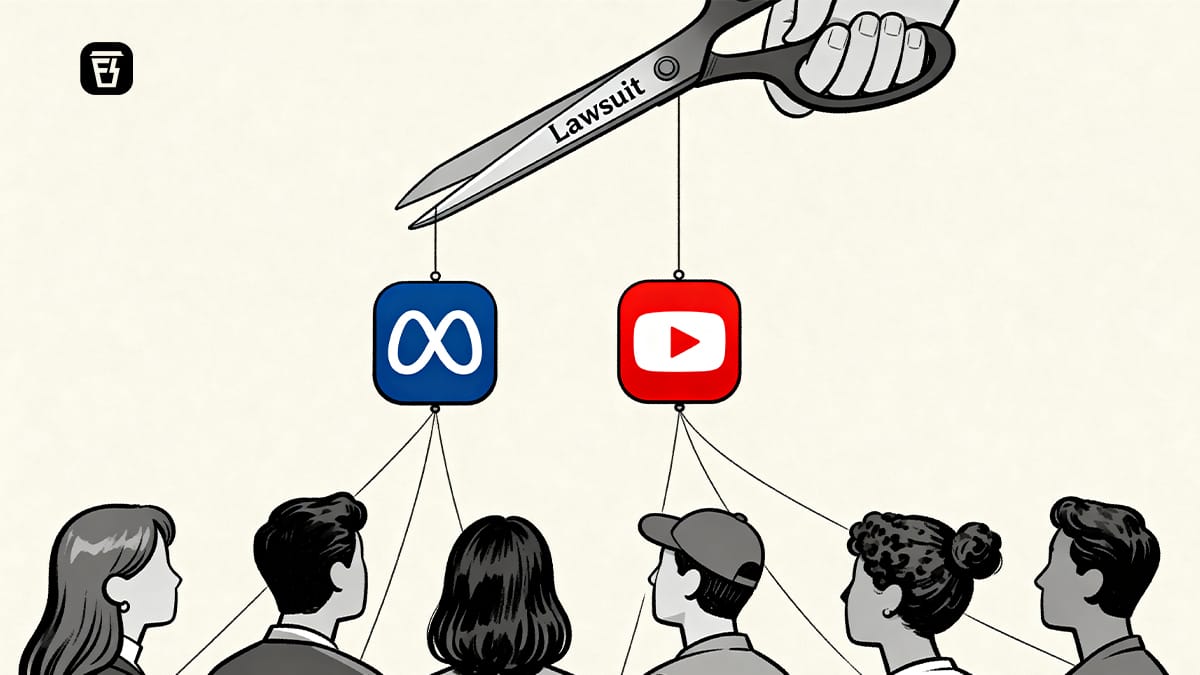

Why Instagram, Facebook and YouTube got sued

In today’s Finshots, we explain why Meta and YouTube were brought to court over their platforms.

Also, here’s a quick sidenote before we begin. This weekend, we’re hosting a free 2-day Insurance Masterclass that helps you build real financial security by understanding health and life insurance the right way.

📅 Today, 31st March at 6:30 PM: Life Insurance

How to protect your family, choose the right cover amount, and understand what truly matters during a claim.

📅 Tomorrow, 1st April at 6:30 PM: Health Insurance

How hospitals process claims, common deductions, the mistakes buyers usually make, and how to choose a policy that won’t disappoint you when you need it most.


Now, on to today’s story.

The Story

Most of us have found ourselves in a situation like this. You open Instagram for a few minutes. One reel turns into five. Five turn into twenty. And before you realise it, half an hour has gone by. Nothing forced you to stay. You could have left at any time.

At least, that’s how it feels.

For years, social media platforms like Instagram and YouTube were seen as neutral spaces. Anybody could join, interact and share pictures and videos from their lives, and at the same time keep up with what was happening in the world and among their closest friends.

And the best part was that it was completely free. No subscriptions and no upfront cost. All you needed was an internet connection and a device.

But of course, nothing is truly free. Because while users like you and me weren’t paying with money, we were paying with something else: our time, our attention and, eventually, our behaviour on the platforms themselves.

You would assume that since these platforms do not create most of the content themselves, they are neutral by design. That whatever impact they have depends entirely on what people choose to watch.

But that assumption misses something important.

Behind the scenes, these platforms were doing more than just hosting content. They were learning about us. Every scroll, pause, and like became a signal. Over time, those signals began shaping what users saw next, how long they stayed, and how often they came back.

Which raises a more uncomfortable question.

If platforms are not just showing content, but actively shaping behaviour, can they still be considered passive?

That question was at the centre of a Los Angeles courtroom battle recently.

Last week, Meta, which owns Instagram and Facebook, and Google, which owns YouTube, were taken to court by a young woman who argued that these platforms were not just engaging, but deliberately addictive. She claimed that using them from a very young age led to usage patterns that, in turn, caused serious mental health issues.

The companies pushed back and argued that there is no such thing as an ‘addictive platform’. After all, billions of people use their products every day. And there’s the matter of choice. How long a user stays is their personal choice and responsibility, not a result of product design. So in many ways, their argument sounded intuitive.

Take your favourite restaurant as an example. As good as the food is, you would hardly call it addictive. And even if you kept going back, it would be strange to expect the restaurant to tell you to stop.

But the court did not see social media the same way.

A restaurant serves you when you walk in. It does not adapt in real time or place another dish on your table the moment you finish one. Social media platforms do.

So why do we continue to scroll?

Because the platforms are doing more than showing content. They are actively guiding what you see and how long you stay.

Features like infinite scroll, autoplay and, of course, algorithmic recommendations weren’t seen as neutral tools. Rather, they were seen as a system that removed the natural stopping points. That means there’s no clear place to pause and definitely no end to reach.

Each swipe does not just show the next post or video. It offers a possibility. Maybe the next one is funnier, more interesting or more relevant.

Most of the time, it is not. But every once in a while, it is.

And that unpredictability is what keeps the loop going.

In court, experts described this as a system built on variable rewards. An unpredictable mix of content that keeps users chasing the next “hit”. This kind of behaviour is not new. Casinos are designed to keep people playing. Shopping malls are designed to keep people browsing. Both use subtle cues to extend how long you stay, often without you noticing it.
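To make “variable rewards” a little more concrete, here is a toy sketch in Python. It is purely illustrative and says nothing about how any real platform is built: the 10% hit rate, the 1,000 swipes and the function names are all assumptions made up for this example. It compares a predictable feed, where every tenth item is a “hit”, with an unpredictable one, where each item has the same small chance of being a hit.

```python
import random


def simulate_feed(num_swipes, hit_probability, seed=0):
    """Return the gaps (in swipes) between rewarding items in a random feed.

    Hypothetical model: every swipe is independently a "hit" with the
    given probability. The numbers are illustrative, not real data.
    """
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    gaps = []
    swipes_since_hit = 0
    for _ in range(num_swipes):
        swipes_since_hit += 1
        if rng.random() < hit_probability:
            gaps.append(swipes_since_hit)
            swipes_since_hit = 0
    return gaps


def fixed_schedule(num_swipes, every_n):
    """Gaps for a predictable feed that rewards exactly every `every_n` swipes."""
    return [every_n] * (num_swipes // every_n)


# Both feeds reward roughly one item in ten on average.
variable_gaps = simulate_feed(num_swipes=1000, hit_probability=0.1)
fixed_gaps = fixed_schedule(num_swipes=1000, every_n=10)

print("fixed gaps (first 10):   ", fixed_gaps[:10])
print("variable gaps (first 10):", variable_gaps[:10])
print("distinct gap lengths, fixed:   ", len(set(fixed_gaps)))
print("distinct gap lengths, variable:", len(set(variable_gaps)))
```

In the fixed feed, you always know when the next hit is coming, so there is an obvious place to stop. In the variable feed, the next hit could be one swipe away or thirty, and that uncertainty is the part the experts were pointing at.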

But this case went beyond how these systems work. Multiple studies in the past have already documented that much. It was about the choices the companies made despite what they knew.

Years ago, Frances Haugen, a former Facebook employee and whistleblower, had already suggested that platforms were aware of the trade-offs between engagement and user well-being. What was once an allegation has now been put forward as evidence in court.

Which meant the question was no longer just about what these platforms did to users. It was about the decisions they made anyway.

And that difference changed everything.

If you remember, in one of our recent stories, we talked about regulating attention and why that is harder than regulating alcohol or tobacco: attention is tied to speech and commerce, among other things.

For years, platforms like Meta and YouTube had a powerful legal shield protecting them. It is called Section 230, and it was built on a simple idea. Since these platforms do not create the content themselves, they cannot be held responsible for it. It is why Facebook was not liable for hate speech on its platform, and why YouTube was not liable for radicalisation videos.

But this case never challenged the content. It challenged the design.

And Section 230 was simply not built to answer for that. It protects platforms for what flows through them. It has no answer for how the pipe itself was built.

So the jury did something that reframed the entire debate. Instead of treating Instagram and YouTube as neutral spaces, they treated them as products. And that changes the standard entirely. A product does not have to guarantee harm for its maker to be held accountable. It is enough that harm was reasonably foreseeable and the company carried on without adequate safeguards.

The jury found that threshold had been crossed. The verdict came with $6 million in damages. But for companies the size of Meta and YouTube, that is a rounding error.

So it raises the question: why fight it at all? After all, platforms like TikTok and Snapchat had quietly settled similar cases before they ever reached a verdict.

The answer is that this was never about the $6 million. It was about what a loss would mean.

To understand that, think about a factory that dumps waste into a river. The factory profits while the town downstream pays for the clean-up. The damage is real, but it never shows up on the factory's balance sheet.

Social media platforms operate in a similar way. The ad revenue, the engagement, the targeting — that stays with the platform. But the anxiety, the depression, the healthcare bills, the lost productivity — those get quietly absorbed by families, schools, hospitals, and governments. The platform books the profit. Society pays the cost.

Economists call this a negative externality. And for a long time, social media companies did not have to account for it.

The tobacco industry once had a similar arrangement. Cigarette companies sold a product they knew was addictive, while governments around the world picked up the healthcare tab. It took decades, and a specific legal shift, before those companies were made to answer for the costs they had long offloaded onto others. The turning point was not the cigarettes. It was proving in court that the companies already knew what their product was doing.

This verdict follows the same logic.

Internal documents showed that the companies knew their design was habit-forming. And yet, they continued to optimise for it. When the jury chose to treat Instagram and YouTube as products rather than passive platforms, those hidden costs finally had someone to send a bill to.

The deeper problem for these companies is that here, the design itself is the product. The algorithm is the revenue engine. If the design becomes legally liable, the very thing that makes them money becomes the source of their legal risk.

Every lawsuit that follows this verdict will use the same argument. And there is no Section 230 defence waiting at the end of it.

Which brings us back to where we started. Remember that half hour you lost on Instagram without quite knowing how?

Well, it turns out someone designed it that way. Someone optimised it, tested it, and shipped it knowing what it could do. And for a long time, the cost of that decision stayed invisible, spread quietly across living rooms, classrooms, and hospital waiting rooms.

Until next time…
